In recent years, headlines have been rife with horror stories about the impact of artificial intelligence (AI) on human resources (HR) work. From discriminatory outcomes that led Amazon to halt the use of automated recruitment tools to several corporate data leaks involving sensitive employee information, the narratives paint a grim picture. Some experts even predict that AI, far from being a job creator, will make it harder for people to secure employment. What’s more, the challenges of educating HR professionals and the workforce they serve about AI’s intricacies loom large.

At Aspen Digital, we wanted to dig deeper. Working with the Mastercard Center for Inclusive Growth, we spoke with experts in the field to uncover the true impact of AI on their work and the workforce they support. There are many applications of AI in HR, but to get concrete about the specific opportunities and tradeoffs, we delved into one aspect of the employee experience where HR professionals are actively considering applying these tools: worker financial security.

Financial security — the ability to live comfortably and pay necessary expenses — affects quality of life and job performance. When it comes to its intersection with emerging technologies, what we discovered surprised us: despite the ominous headlines, AI development in this space is limited and adoption rates are low. While this slow progression is driven by legitimate concerns such as automating bias and data privacy risks, that doesn’t mean that all AI is bad or will cause negative effects. What HR professionals need are resources to guide their decision-making so they can make informed choices about where technology is appropriate and where it’s not.

How is AI used in Human Resources right now?

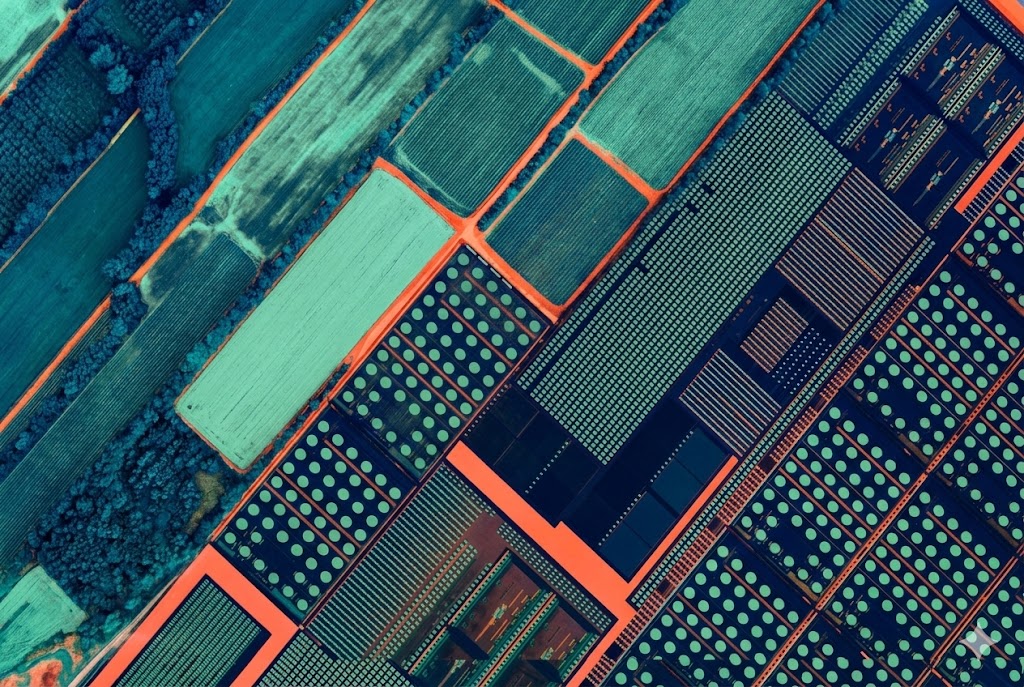

Artificial intelligence has found its way into various HR functions, with the majority of adoption happening in larger firms. Notable applications include hiring and recruiting as well as data and trend analysis. HR professionals are drawn to automation that sorts through a pool of candidates to find the best fit, because it’s pitched as a way to let them focus on screening those who are most likely to be successful.

Job applications aren’t the only place where companies have turned to automation for help. HR professionals must also sort through massive amounts of other data like employee demographics, performance, and well-being metrics. This can be made more manageable by using algorithms and predictive analysis.

Despite these use cases, our research and conversations showed that AI tools for worker financial security are still nascent technologies with limited adoption.

How AI could help worker financial security

While the use cases are currently limited, artificial intelligence could serve as a helpful tool for both HR professionals and workers if approached responsibly.

Personalization

For employees to use employer-sponsored benefits effectively, those benefits should suit each employee’s specific needs. For example, if an employee doesn’t have long-term savings or disposable income, they might not be able to fully benefit from services like financial planning courses. Although HR professionals are aware of the mismatch between benefits and worker needs, they aren’t always equipped to address the issue. Artificial intelligence tools can help by personalizing an employee’s use of benefits, or the benefits themselves, to fit their needs right now.

Leveraging employee data to tailor benefit offerings might mean trading off some data privacy, and that decision shouldn’t be made lightly. That said, the reality is that most of us have already become much more comfortable with trading our data for things like personalized news feeds, social media recommendations, and search results. A survey by Accenture Strategy even found that “more than six in 10 workers (62 percent) would exchange their work-related data for more-customized compensation, rewards, and benefits.”

If employee data is used, responsible AI personalization requires transparency about how the data is used, strong security, and clear data rights for employees.

Alternative data sources

Not all workers have a credit history, which can be a barrier to accessing financial services that can bolster financial security, such as personal loans and credit. To counteract this issue, more financial institutions are making the shift away from conventional credit models. By using supplementary data, companies have an opportunity to support employees experiencing financial instability. They can use financial data generated through their payment systems to streamline the integration of new and beneficial services, offering up options to employees they might not have had before.

For example, the financial services company Plaid recently introduced Plaid Income, which verifies income by extracting data directly from payroll documents such as pay stubs or W-2s. That information is then transmitted directly to lenders, consolidating and easing the process for applicants. Another company, the Nigerian financial inclusion startup ImaliPay, serves as a comprehensive solution for gig workers, offering access to services like ‘buy now, pay later’ (BNPL), insurance, and savings, which they might not usually have access to due to their work status or classification.

Once again, robust privacy protections are paramount in striking the right balance between helpful and harmful employee data usage.

Why isn’t there more AI adoption in worker financial security?

While there are potential benefits to employing AI, several challenges have slowed the adoption of artificial intelligence in worker financial security. These challenges include data privacy concerns, the risk of discrimination and a lack of contextual understanding, educational gaps for both HR professionals and the workforce, hurdles in tool rollout and adoption, and issues of accessibility and ownership.

“I think the external technology market is moving so fast and maybe isn’t as compliant as what we’re looking for. Trying to balance that employer responsibility over protection of employee data… It’s important to start with what companies can do internally before looking at outside services.”

– an HR executive at a major retailer

Moreover, different types of workers have diverse needs, with varying financial goals and communication preferences. Deskless employees don’t have the same access to technology that traditional office workers do. Lower-income workers are more worried about saving for the short term than about tracking retirement goals. Many are already using a patchwork of tools to manage their financial lives, making integration challenging.

“It’s really important to understand what people are using now, what works for them, and not assume that you’re going to provide them with a solution to a problem that they think they already have a solution for. Find ways to plug in without becoming a layer on top of something already working well.”

– a worker advocate

Key concerns when considering new technologies

At the beginning of 2023, Aspen Digital hosted three virtual workshops with corporate HR executives, labor activists, and fintech experts. Out of those conversations, three key themes emerged on the deployment of artificial intelligence tools for worker financial security:

1. Solution Bias

Companies should not prioritize a solution simply because they assume it’s needed; they should first confirm that it actually is. Ahead of adopting a new tool, companies should ask: How is it better than what’s already out there? How will it enhance or add to what workers have already prioritized for themselves? Is there a better way?

2. Transparency

Employers should understand the business model of a tool (how it makes money) as well as its developer’s data privacy practices, and then communicate all of that information to workers. They should ask: How do these services work? How will they use employee data?

3. Accessibility & Ownership

Companies should acknowledge that employees need to be part of the process, consulted in the development and the road-testing of not only the product but also the employer’s use of that product.

“Financial conversations used to be more personal and something done at home. Now the employer is more involved. For some of our employees, we are really adapting and flexing our benefits to whatever we’re hearing from them are their unmet needs and wants.”

– a corporate HR executive at an electronics distributor

When considering tech for worker financial security

Here’s what to check for when considering adopting automated financial service tools:

| Guiding Question | Reasoning |
|---|---|
| What was the developer’s motivation behind creating this product or service? | Examining the underlying drive behind the creation of a tool can offer valuable clues as to its suitability for your employees, for instance, whether it was founded on assumptions or on tangible employee data. |
| How have workers been involved in the development of this product or service? | It’s crucial that a developer can demonstrate concrete instances where workers were engaged, to make sure it’s both practical and beneficial. |
| What is the business model for the product or service? How does it make money? | Understanding the revenue generation strategy behind a tool can unveil potential vulnerabilities in its business model. |
| What are the developer’s data privacy practices? | As employees become increasingly vigilant about privacy and keeping personal data safe, it’s important to be discerning when incorporating new technology into organizational processes to safeguard internal company data. |
| How has the developer integrated diversity, equity, inclusion, and accessibility principles into the design, build, and implementation of the product or service? | When inclusivity is absent in the development of a tool, the resulting product excludes the employees who may need it the most. |

Final thoughts on worker financial security

The caution surrounding the adoption of artificial intelligence is understandable and laudable, given the potential pitfalls. But it doesn’t mean that innovation in worker financial security will (or should) stall completely. In the midst of contrasting narratives surrounding AI’s impact, and as technology evolves and ethical standards improve, it’s essential to maintain a human-centered approach. The experiences and needs of workers most impacted by automation should guide our conversations and decisions.

Read more about the intersections of AI and worker financial security in our recent report, and find HR resources for evaluating emerging technology.

The views represented herein are those of the author(s) and do not necessarily reflect the views of the Aspen Institute, its programs, staff, volunteers, participants, or its trustees.

Morgan McMurray joined the Aspen Digital team in September 2022 as a Google Public Policy Fellow. She now serves as a Program Associate on projects focusing on responsible innovation, data privacy, and the impact of emerging technology. She graduated in May 2023 with a Master of Public Administration from Columbia University’s School of International and Public Affairs, where she focused on technology and security policy.