Issue 3 | March 2026

In this issue

- When War Goes Synthetic: AI-generated fakes are making it harder to know what’s real in conflict zones.

- Why AI Pilots Aren’t Enough: Vilas Dhar of the Patrick J. McGovern Foundation tells Aspen Digital why it’s time for news organizations to move beyond cautious AI experimentation.

- A Journalist Sues an AI Writing Assistant: Julia Angwin’s case against Grammarly raises the question: Who owns your name and professional reputation in the age of AI?


The Big Picture

The strategic question every publisher is wrestling with right now

In the AI Era, the Fog of War is Getting Thicker

When US and Israeli forces struck Iran in late February, the information war started before the smoke cleared. Within hours, AI-generated videos of airstrikes over Tel Aviv were circulating on X and had been viewed millions of times, a dynamic vividly documented in a New York Times story published this month.

❓Why This Matters Now

Despite how quickly AI-generated video can now spread, reliable tools to detect AI-generated content have largely failed to materialize. Case in point: In January, NewsGuard tested whether Grok, ChatGPT, and Gemini could detect AI-generated video created by OpenAI’s Sora once watermarks were removed — and found failure rates of 95%, 92.5%, and 78%, respectively. Those watermarks, the primary safeguard AI companies point to, are easily stripped using free tools that appeared online within weeks of Sora’s launch. OpenAI’s chatbot failed to detect video created by its own tool more than nine times out of ten.

NewsGuard has since identified a second failure point: Google’s AI Overviews, widely used to verify images, repeated Iran war falsehoods in four documented cases when prompted with reverse image searches, adding fabricated details that made false claims more credible, not less. In one case, Google’s AI Overview was fooled by an image generated by Google’s own image tool. When fact-checkers at Full Fact raised similar concerns about AI Overviews and conflict footage in 2025, Google acknowledged some errors but attributed them to problems with underlying search results, not AI itself, and maintained the feature rarely hallucinates.

In an interview with Aspen Digital, Isis Blachez, a staff analyst at NewsGuard, says the trajectory is clear: “The amount of AI-generated content circulating online and advancing falsehoods has notably increased with every international conflict. The content has also become more believable, as AI generation tools — accessible to a wide public and requiring little skill — produce hyper-realistic images and videos.”

📣 What’s Actually Happening

This isn’t anonymous trolls posting fakes. NewsGuard found that provably false claims from sources affiliated with the Iranian government nearly quadrupled in the days following the February 28 strikes. The playbook mirrors Russia’s in Ukraine. In both cases, AI tools let state actors produce convincing fakes faster than anyone can debunk them. And the chatbots audiences turn to for help aren’t just missing the fakes — they’ve been caught vouching for them, as the Google AI Overviews case showed. Meanwhile, most newsrooms are now covering simultaneous conflicts with the same editorial capacity they had for one.

Blachez puts it plainly: “AI-generated content spreads faster to fill information voids, as people turn to the internet when verified footage doesn’t emerge quickly enough.”

⚠️ The Stakes for the News Industry

- A trust problem with no easy fix. A newsroom that publishes false synthetic conflict content — even inadvertently — faces a credibility penalty that’s hard to recover from. The risk isn’t only getting a story wrong; it’s being seen as a conduit for the disinformation itself.

- The standard verification tools are no longer reliable. Reverse image search — a go-to step for checking conflict imagery — is now itself a source of misinformation. As NewsGuard’s research reveals, detecting AI-generated visuals requires human verification at every step, and even then, the tools don’t always get it right.

- For now, the platforms are focused on a different problem. YouTube recently expanded its AI likeness detection tool to journalists and public officials; the UK government launched a deepfake detection initiative with Microsoft; and third-party tools like Hive, Reality Defender, and Sensity are in active use by some newsrooms. Each is a meaningful step — but none addresses the synthetic imagery of war and conflict that is already reaching tens of millions of viewers unchecked.

👓 What To Watch Next

- Whether AI companies will publicly acknowledge the limits of their detection tools, and what pressure, regulatory or competitive, forces that admission.

- Whether verification standards for AI-generated conflict material – the BBC’s Verify unit is one model – gain traction in the industry.

NewsGuard’s Blachez sees no near-term relief: “New challenges will emerge as bad actors refine their tactics and adapt their campaigns to target polarizing topics.”

🔎 GO DEEPER: With verification tools now being compromised by AI, researchers at WITNESS lay out how the bedrock assumptions of open-source investigation are breaking down, and what newsrooms need to do differently. Read the Reuters Institute analysis →

signal scan

A curated round-up of how AI is reshaping news – and trust

Google Search Lead: Search and Gemini May Converge, or Diverge Further

Google’s head of Search, Liz Reid, says she doesn’t know whether Google Search and its AI chatbot Gemini will remain separate products, converge, or be replaced by an AI-agent product altogether. The outcome is being watched closely by news publishers who depend on Google as a key driver of audience discovery and traffic.

Source: Search Engine Land

BuzzFeed Debuts AI Slop Apps in Bid for New Revenue

Days after disclosing “substantial doubt” about its ability to stay in business, BuzzFeed unveiled two new AI-powered consumer apps at SXSW. But the announcement fell flat, underscoring the challenges news organizations face in redefining themselves in the AI era.

Source: TechCrunch

Reuters Institute AI and the Future of News 2026: What We Learnt About AI’s Impact

The Reuters Institute annual conference brought together journalists, fact-checkers and researchers to examine how AI is reshaping newsroom practice, fact-checking at scale and how journalists cover AI. The throughline: news organizations are becoming more capable and more vulnerable at the same time.

Source: Reuters Institute

Study: ChatGPT, Claude, Gemini, and Grok Are All Bad at Crediting News Outlets

A new study finds that the leading AI chatbots routinely draw from original reporting to answer news questions, but almost never tell users where that information came from. The worst offender: ChatGPT.

Source: Nieman Lab

The News/Media Alliance has signed a deal with an AI startup to help its 2,200 publisher members license content to enterprise AI systems – the internal tools that legal, financial and healthcare companies use to answer employee queries.

Source: Digiday

👂 Sound Scan

CNN, The New York Times and Reuters on AI in the Newsroom: What’s Working, What’s Next, and What’s at Stake (Apple Podcasts)

AI leads from top news media organizations sit down together to assess where AI is producing real results and what it means for newsrooms still debating how aggressively to move.

Source: Newsroom Robots

Data point

An AI and News trend visualized

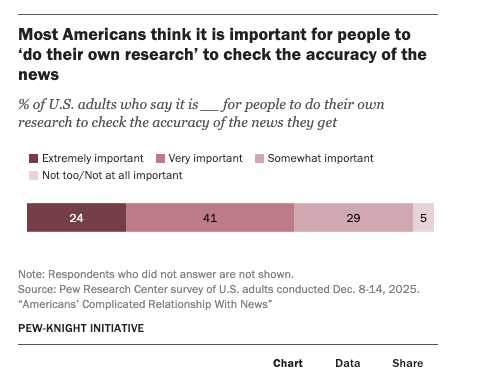

Nearly all Americans say it is at least somewhat important to do their own research to check the accuracy of the news they get. The catch: “doing your own research” means very different things to different people, and in an era of declining trust, it increasingly means questioning what major news organizations say.

primary sources

How industry leaders are thinking about AI, trust and what comes next

“Most publishers do not need another seminar on what a large language model is. They need room to think. The leaders making progress here have accepted that adaptation is part of the work now.”

-Vilas Dhar, President of the Patrick J. McGovern Foundation

As president of the Patrick J. McGovern Foundation, Vilas Dhar oversees more than $500 million committed to public purpose AI, with journalism and AI as a central focus. He speaks with Aspen Digital about why institutional inertia, not technical complexity, is the real barrier holding newsrooms back.

Aspen Digital: The McGovern Foundation has committed over $500 million to public purpose AI, with journalism and media as a key focus area. What’s the case for how AI can strengthen rather than undermine news and information?

Vilas Dhar: Journalism is being forced to live inside an AI transition before society has actually understood or decided what that transition is. That makes this a test case for more than newsroom efficiency — it’s an existential question on the foundations of our democratic institutions. A newsroom that can translate its reporting into languages it has never served, move through public records faster, or deliver information in formats that fit the way people actually live stands a better chance of reaching people when it counts, so the efficiency argument is real and meaningful. But the dangers are here too. AI is accelerating misinformation, putting more stress on an already fragile economic model, and pulling value from reporting in ways the field has not yet worked through. Navigating this complexity means media organizations have to be willing to rethink how they work in ways that serve their purpose rather than defaulting to finding local efficiencies. That requires institutional imagination and the capacity to take on risk and experimentation.

Aspen Digital: You’ve said that the greatest barrier to adoption of AI is not technical complexity but “institutional inertia.” What does that mean for publishers trying to figure out AI while under economic strain?

Vilas Dhar: Most publishers do not need another seminar on what a large language model is. They need room to think. Institutional inertia is the weight of habits, incentives, fears, and structures that lead organizations to treat a structural shift like a passing disruption and that force reaction rather than proactive visioning. Economic strain makes this harder because scarcity distorts judgment. Under pressure, every technology conversation gets pulled toward cost savings. That is understandable, but it narrows the frame too quickly and leaves the possibility of transformative value creation out of the picture. The leaders making progress here have accepted that adaptation is part of the work now. They start carefully, by protecting the areas where human judgment belongs but still making enough space to learn.

Aspen Digital: You’ve described a framework where AI will move from “being done to us” to “for us” to ultimately “by us.” What would it look like for communities, not just newsrooms, to have genuine agency over how AI shapes local information?

Vilas Dhar: We spend a great deal of time asking what AI can do for newsrooms. The more interesting question is what happens when communities have the tools and standing to shape their own information systems. In India, Digital Green built FarmerChat, a multilingual AI tool now serving more than 460,000 farmers across five states. Farmers use it to ask questions about crop disease, soil health, and market conditions in their own language, with answers grounded in verified agricultural knowledge. The lesson here is more than the technical pilot or the model use; rather, examples like this give us a toolkit to understand how to design new systems that reflect the needs, language, and daily reality of the people using them. That is the direction local information should move: community organizations building tools around public records, neighborhood concerns, school systems, and city council decisions. Newsrooms still have an essential role, but they could act as organizers of a wider civic infrastructure in which communities help define the problem, shape the standards, and hold systems accountable.

Aspen Digital: Deepfakes and synthetic media create a kind of corrosive doubt. People become uncertain of everything, even authentic content. How do you see this affecting the broader information ecosystem that journalism depends on?

Vilas Dhar: The deepfake problem is often framed as a detection problem. I think the deeper damage runs below that. Synthetic media weakens the assumption that what we are seeing or hearing bears a reliable relationship to what happened. You do not need every falsehood to be believed. You only need enough uncertainty that people begin to treat reality itself as negotiable. It also expands the surface area for scams and fraud. When voices can be cloned, identities fabricated, and convincing false evidence produced at almost no cost, the harm does not stop at politics or public discourse. It reaches into banking, family life, emergency response, and the ordinary transactions that hold social trust together. That is why the response cannot sit with journalists or technology companies alone. It has to be a whole-of-society effort involving newsrooms, platforms, financial institutions, schools, law enforcement, and public agencies. I want to highlight leaders like Craig Newmark here, who have shown real leadership by helping bring together cyber, journalism, and trust practitioners around a problem no single field can solve on its own.

Aspen Digital: You’ve emphasized that technology should be guided by “hope” as a governing principle. In an industry experiencing declining trust, layoffs and significant, maybe existential, threats, what gives you hope about journalism’s future?

Vilas Dhar: I am not interested in hope as a mood. I am interested in hope as discipline — a way of turning optimism into practice that builds better futures. The need journalism serves has not gone away. In a noisier and more synthetic information environment, it may actually be harder to replace than before. But people still need ways to understand what is happening around them, to see power examined, and to make decisions with some grounding in fact. That demand remains, even if the institutions meeting it are under strain. And in that environment, I find confidence where some of the most thoughtful reinvention is happening. Local outlets, nonprofit models, and community-rooted publishers are building closer relationships with the public because they have had to be specific about their purpose. They are experimenting with products, distribution, and technology, but they are also asking a deeper question about what it means to be genuinely useful to a community now. That is a healthier place to build from than nostalgia for an older model.

Aspen Digital: What’s the one thing news executives should understand about the AI transition that they are missing?

Vilas Dhar: AI will not rescue a weak business model. It will intensify whatever logic is already shaping the institution. I’m deeply concerned when executives approach AI first as a headcount question. There are efficiencies to find, and every institution should look honestly at where time is wasted. But if the first move is to cut, you send a signal to staff and to the public about how leadership understands the work.

In the feed

One share worth a closer 👀

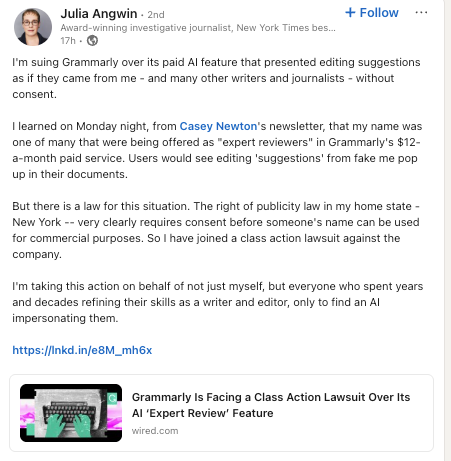

Award-winning investigative journalist Julia Angwin is leading a class action lawsuit against Grammarly for impersonating her as an AI “expert reviewer,” and she’s not alone. For journalists and editors who spent careers building credibility, the lawsuit raises a question that goes well beyond one company: who owns your professional reputation in the age of AI? (Watch the CEO of Grammarly defend the company’s use of AI-cloned “experts.”)

stay in touch

Explore our full library of research and resources, and sign up for this newsletter if you haven’t already.